SSNet reduces network complexity and computation by drawing on the advantages of U-Net and ResNet, and improves detection accuracy.

#DEEP RESIDUAL LEARNING FOR IMAGE RECOGNITION SERIAL#

Extracting buildings automatically from high spatial resolution remote sensing imagery is considered an important task in many applications. The huge differences in the appearance and spatial distribution of man-made buildings make it a challenging problem. In recent years, convolutional neural networks (CNNs) have made remarkable progress in computer vision, and many published papers have applied deep CNNs to remote sensing successfully. However, most contributions require complex structures and a large number of parameters, which lead to redundant computations and limit the application of the models. To address these issues, we propose a deep residual learning serial segmentation network called SSNet, an end-to-end semantic segmentation network, to extract buildings from high spatial resolution remote sensing imagery.

In the early stage of training, a residual network converges faster than a plain network.

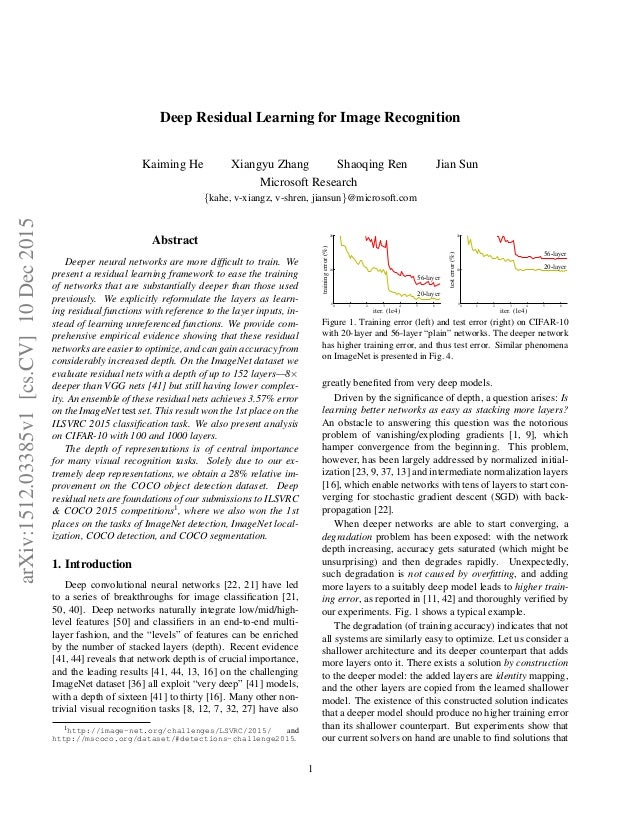

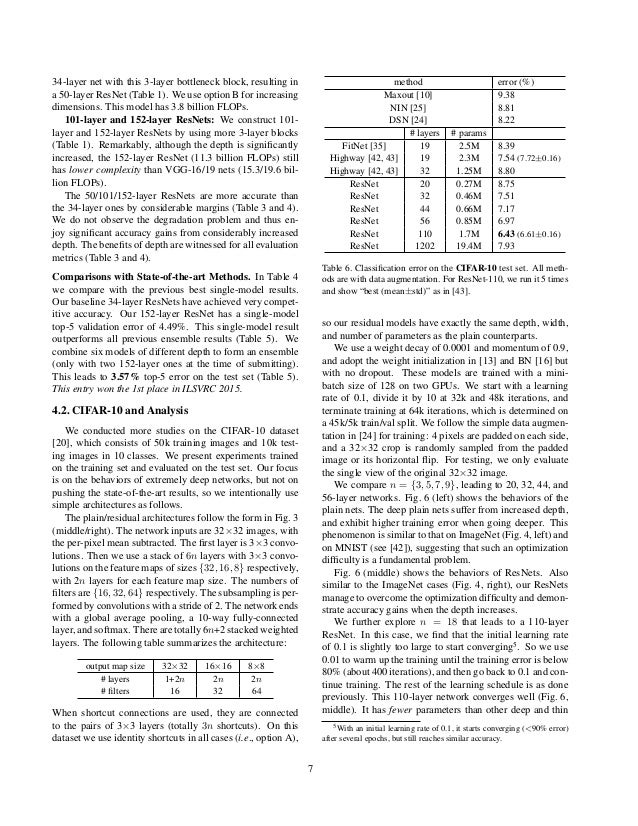

- The error rate goes up as the number of layers in a plain network increases; by contrast, it goes down as the number of layers in a residual network increases.
- **Don't use dropout**, because the fully connected layer holds only a small share of the parameters.
- Training uses a weight decay of 0.0001 and a momentum of 0.9.
- Use a projection matrix to make the dimensions of $F(x)$ and $x$ match.
- An identity mapping doesn't add parameters to the network.
- Add shortcuts to a plain network to turn it into its residual counterpart.
- If $F$ has only a single layer, the block is similar to a linear layer, $y = W_1 x + x$, for which we have not observed advantages.
- The dimension of $F$ may differ from that of $x$; use a projection $W_s$ to change the dimension of $x$.
- In principle, a deeper network should perform at least as well as its shallower counterpart.
- Powerful shallow representations (more effective).
- Plain networks show higher training error when the depth increases.
- Residual networks can easily enjoy accuracy gains from greatly increased depth.
- To the extreme, if an identity mapping were optimal, it would be easier to push the residual to zero than to fit an identity mapping by a stack of nonlinear layers.
- It is easier to optimize the residual mapping than to optimize the original, unreferenced mapping.
- Identity shortcut connections add neither extra parameters nor computational complexity.
- The formulation can be realized as a feedforward neural network with shortcut connections.
- The original underlying mapping is recast as $H(x) = F(x) + x$.
- The stacked nonlinear layers fit another mapping, $F(x) := H(x) - x$.
- Let these layers fit a residual mapping instead of the desired underlying mapping.
- But experiments show that **current solvers are unable to find solutions** that are comparably good or better than the constructed solution, or are **unable to do so in feasible time**.
- The remaining layers take their values from the shallower architecture.
- The existence of this constructed solution indicates that a deeper model should produce no higher training error than its shallower counterpart.
- Consider a shallower architecture and its deeper counterpart that adds more layers onto it.
- Degradation indicates that not all systems are similarly easy to optimize, because the deeper network reaches lower training accuracy.
- With the network depth increasing, accuracy gets saturated and then degrades rapidly.
- Degradation: as the layers get deeper, the network gets a higher error rate.
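The residual formulation in these notes, $H(x) = F(x) + x$ with an optional projection $W_s$ when dimensions differ, can be sketched in a few lines. This is a minimal NumPy illustration, not the paper's implementation: it assumes fully connected layers instead of convolutions, omits biases and batch normalization, and the names `residual_block`, `W1`, `W2`, and `Ws` are hypothetical.

```python
import numpy as np

def relu(z):
    """Element-wise rectified linear unit."""
    return np.maximum(z, 0.0)

def residual_block(x, W1, W2, Ws=None):
    """Return relu(F(x) + shortcut(x)) with F(x) = W2 @ relu(W1 @ x).

    If Ws is None the shortcut is the identity (no extra parameters);
    otherwise Ws projects x so its dimension matches F(x).
    """
    f = W2 @ relu(W1 @ x)                    # residual mapping F(x), two layers
    shortcut = x if Ws is None else Ws @ x   # identity or projection shortcut
    return relu(f + shortcut)

# Pushing the residual to zero leaves only the identity path:
x = np.array([1.0, 2.0, 3.0])
zero = np.zeros((3, 3))
y_identity = residual_block(x, zero, zero)   # equals x, since x is non-negative

# Projection shortcut when F(x) has a different dimension than x (3 -> 2):
rng = np.random.default_rng(0)
y_projected = residual_block(
    x,
    rng.normal(size=(4, 3)),     # W1: 3 -> 4
    rng.normal(size=(2, 4)),     # W2: 4 -> 2
    Ws=rng.normal(size=(2, 3)),  # Ws: 3 -> 2, matches the output of F
)
```

The zero-weight case mirrors the argument that, if an identity mapping were optimal, the layers only need to drive $F(x)$ toward zero.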

- Vanishing gradient (as distinct from degradation): as the number of layers increases, the gradient becomes too small for the network to keep learning.
- Network depth is of crucial importance, and the leading results gain accuracy from considerably increased depth.
- Deeper neural networks are more difficult to train.

> Kaiming He, Xiangyu Zhang, Shaoqing Ren, Jian Sun, *Deep Residual Learning for Image Recognition*
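The vanishing-gradient note can be made concrete with a toy example. The sketch below is an illustration only and is not taken from the paper: it assumes a chain of scalar sigmoid layers with a shared weight `w`, and the function name `input_gradient` is hypothetical. Because the sigmoid derivative is at most 0.25, the gradient with respect to the input shrinks geometrically with depth when `w = 1`.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def input_gradient(depth, x=0.5, w=1.0):
    """d(output)/d(input) for `depth` stacked scalar layers a <- sigmoid(w * a)."""
    a, grad = x, 1.0
    for _ in range(depth):
        a = sigmoid(w * a)
        grad *= w * a * (1.0 - a)  # chain rule: sigmoid'(z) = s * (1 - s)
    return grad

# Gradient magnitude at a few depths; each extra layer multiplies it by <= 0.25.
gradients = {depth: input_gradient(depth) for depth in (1, 5, 20)}
```

Degradation is a separate observation in these notes: it concerns training error rising with depth, not the gradient magnitude shown here.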